Humble Counter

Or how I got suckered into a horrific idea by Claude.

I’m a big fan of D&D.

I love the open, vibrant, reactive world. I love interacting with a colorful cast of characters. I love finding creative solutions to problems via the flexible game mechanics and narration. The strength of D&D lies in the interplay between the narrative creativity of the GM and the harsh rigor of dice rolls and deterministic rules.

Conversely, I find that online RPGs suffer from excessive determinism. Sure, you can pull off intricate combos or employ deep strategy in turn-based RPGs, but you can rarely talk the dragon out of eating you by convincing it you have 50 salted T-bone steaks back at home. And text-based adventure games are notoriously unforgiving about word choice and often demand ridiculously specific solutions to succeed.

I was discussing plans to start a new campaign with some friends at a local bar when I had the following thought:

What if you could use an LLM to run a game of D&D in your terminal?

Enter AUTO-DUNGEON.

Auto-dungeon was my grandiose attempt at building such a thing: a text-based adventure game in the vein of D&D 2e dungeon crawls, using an LLM-powered engine to interpret the player’s actions, update the game state accordingly, and narrate the outcomes back to the player as new story beats.

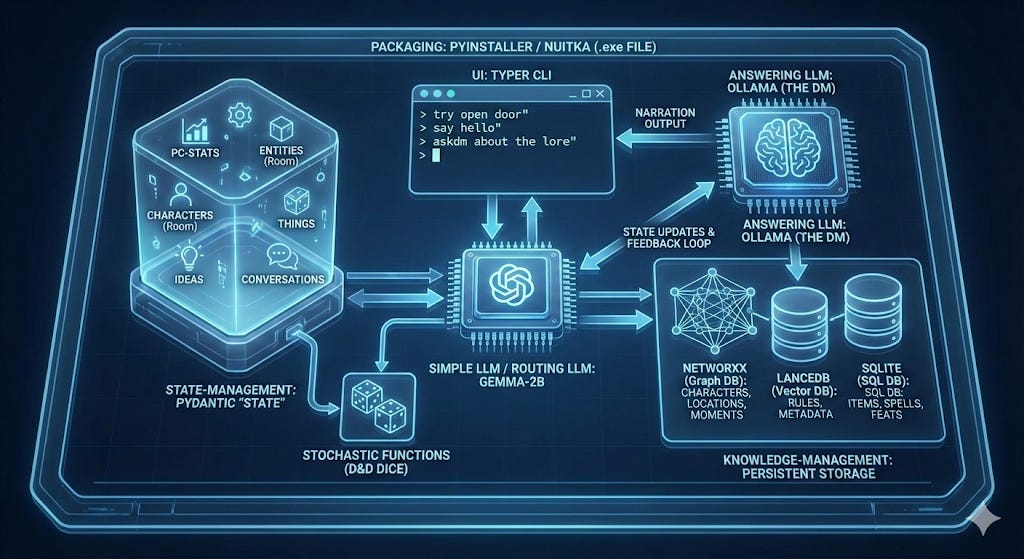

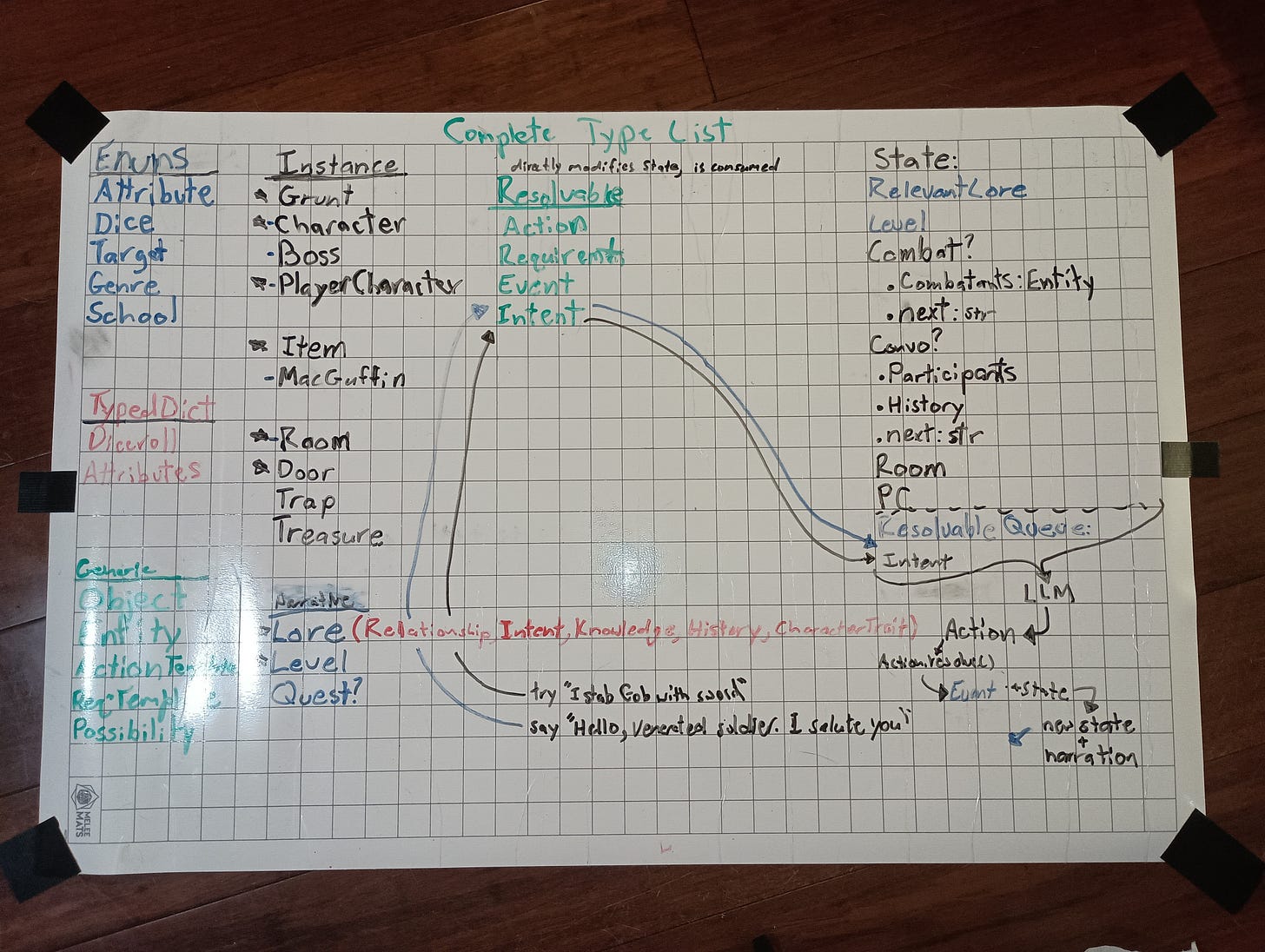

It would have:

Persistent state, consisting of a hierarchical tree with tens if not hundreds of nodes.

SQLite storage for stats and rules relating to the hundreds of different items, rooms, monsters, and characters that would populate the game world.

A graph DB and a separate vector DB for lore snippets (plot, characters, relationships, etc.) and general game rules (NetworkX and LanceDB, respectively).

A “low-cost” routing LLM for validating user prompts, augmenting them with context from the aforementioned databases, and routing them to the answering LLM.

An answering LLM which would update the state according to the player’s declared action.

I was going to replace the many action words traditionally used in MUDs with just two: try and say (there were also askdm and stats for gathering info). My trusty open-source LLM would interpret these actions as updates to the game world! That’s right, I said open source. I wasn’t going to turn to fancy, expensive LLM APIs, no! My game was going to be powered entirely in-house by the open-source model Mistral-7b. (Yes, I know DeepSeek is open-source, but it’s also so large that trying to download and run it would fry my puny GPU.)
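To make the two-verb idea concrete, here is a minimal sketch of how input like that might be split before any LLM sees it. It assumes a hypothetical `parse_command` helper and `Command` type (neither comes from the auto-dungeon code): the verb is matched deterministically, and only the free-form text after it would be handed to the model.

```python
"""Hypothetical sketch of a two-verb command interface (plus two info commands).

Command and parse_command are illustrative names, not the original auto-dungeon code.
"""
from dataclasses import dataclass

# "try" and "say" act on the world; "askdm" and "stats" only gather info.
ACTION_VERBS = {"try", "say"}
INFO_VERBS = {"askdm", "stats"}


@dataclass(frozen=True)
class Command:
    verb: str       # one of the four recognized verbs
    text: str       # free-form remainder, which would be passed to the LLM
    mutates: bool   # True if the command is allowed to change game state


def parse_command(line: str) -> Command:
    """Split raw input into a verb and free text; reject unknown verbs."""
    verb, _, rest = line.strip().partition(" ")
    verb = verb.lower()
    if verb not in ACTION_VERBS | INFO_VERBS:
        raise ValueError(f"unknown command {verb!r}; use try, say, askdm, or stats")
    # Every verb except "stats" needs something for the LLM to interpret.
    if verb != "stats" and not rest.strip():
        raise ValueError(f"{verb!r} needs some text after it")
    return Command(verb=verb, text=rest.strip(), mutates=verb in ACTION_VERBS)


if __name__ == "__main__":
    print(parse_command("say I have 50 salted T-bone steaks back at home"))
```

Keeping the verb check in plain code means a typo like `sya` fails immediately instead of burning an LLM call.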

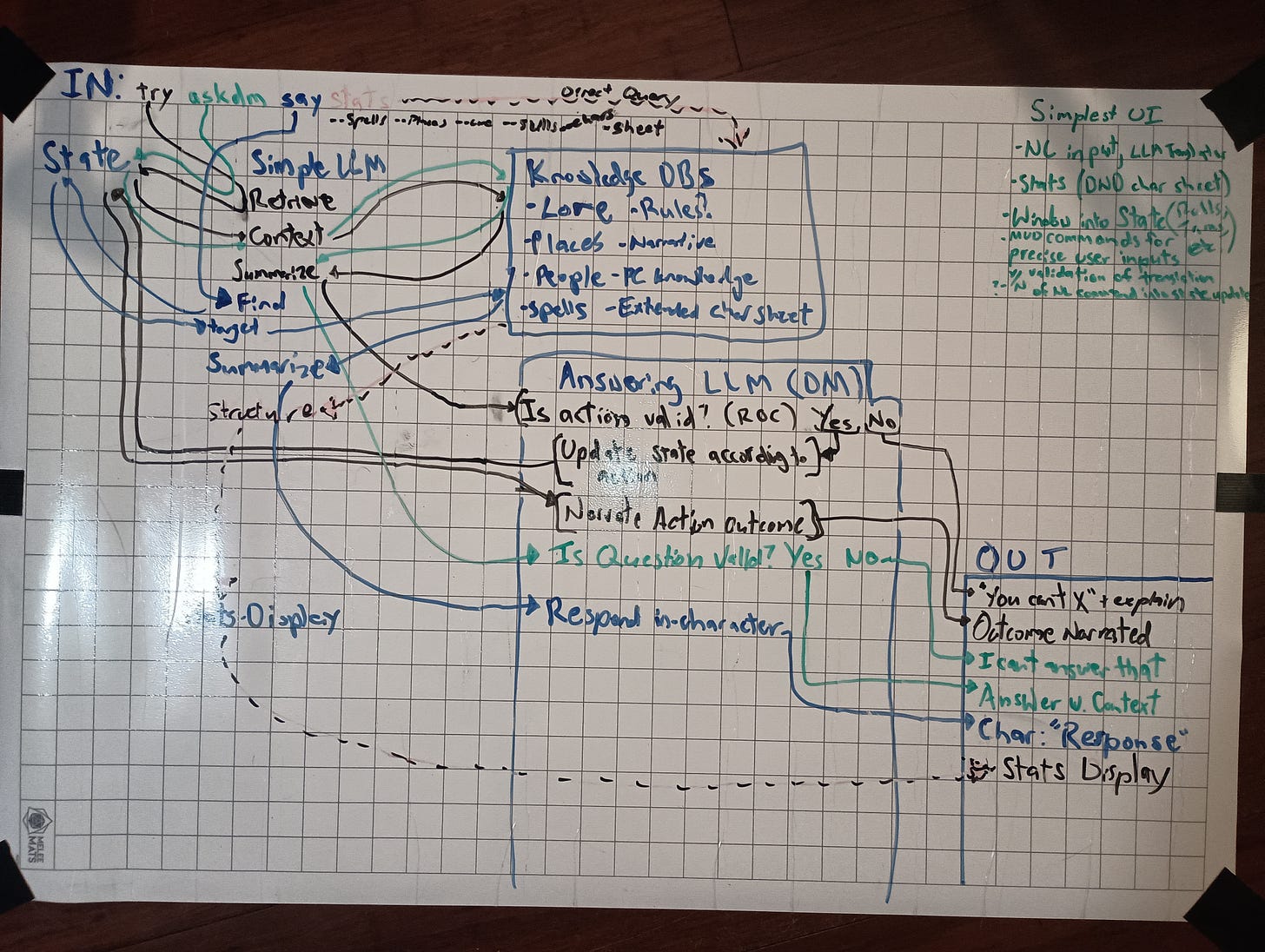

Project Architecture

(Architecture diagrams made by me on top, and by Nano-banana Pro on the bottom. These diagrams reflect how the project was actually going and how I felt it was going, respectively.)

Unfortunately, my grand plan had some holes in it.

State is structured; LLMs are not. It is not easy to convince an LLM to output structured state, even if it understands the state being passed into it.
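One common mitigation is to treat the model's reply as untrusted input: parse it, check it against a fixed shape, and only then touch the state. A minimal sketch, assuming a made-up three-field state (`hp`, `gold`, `location`) and a hypothetical `apply_update` helper, neither of which is auto-dungeon's actual data model:

```python
"""Hypothetical sketch: never apply LLM output to game state without validating it.

The hp/gold/location schema and apply_update are illustrative assumptions.
"""
import json

# The only fields the model is allowed to change, and their expected types.
ALLOWED_FIELDS = {"hp": int, "gold": int, "location": str}


def apply_update(state: dict, llm_reply: str) -> dict:
    """Parse the model's reply as JSON and apply it only if every field checks out."""
    try:
        update = json.loads(llm_reply)
    except json.JSONDecodeError as err:
        raise ValueError(f"model reply is not JSON: {err}") from err
    if not isinstance(update, dict):
        raise ValueError("model reply must be a JSON object")
    for key, value in update.items():
        expected = ALLOWED_FIELDS.get(key)
        if expected is None:
            raise ValueError(f"model tried to set unknown field {key!r}")
        # bool is a subclass of int in Python, so reject booleans explicitly.
        if not isinstance(value, expected) or isinstance(value, bool):
            raise ValueError(f"field {key!r} should be {expected.__name__}")
    return {**state, **update}  # build a new dict; the old state is left untouched
```

A reply like `The goblin hits you! {"hp": 9}` is rejected outright, which is exactly the kind of chatty, almost-JSON output that makes this so hard in practice.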

Large state quickly becomes unmanageable LLM context. The LLM has to understand not only what’s going on in natural language, but also how to translate that natural language into state. After all, what’s going to translate a natural-language update into an update in state? An LLM. Once I understood how daunting it would be to translate state into context, I scrapped the graph, vector, and SQL databases. Even the GameState alone, describing just the room the PC is in and his nearest enemies, became a massive JSON tree that Mistral-7b struggled to understand.

Managing the flow of state in this game is difficult. How does the game decide when an enemy should take their action? Do players or enemies get to react to player actions? What if an action has consequences on the state that might cause another action to occur?

And the fundamental issue?

LLMs absolutely suck at updating deterministic state.

The whole point of LLMs is to create complex, flexible, natural language responses. The whole point of state is to be so standardized and unambiguous that any simple parser can read it.

Using an LLM to update state is like commissioning James Joyce to fill out your forms at the DMV.

Sadly, auto-dungeon was just that. A fanciful idea I had at the bar that only amounted to a Python graveyard on GitHub.

With that in mind, I really wanted to take something away from this lesson on state management with LLMs. Something to really showcase the raw, brazen power of an LLM put to work doing the most banal, frivolous computational task imaginable. So now, I present to you…

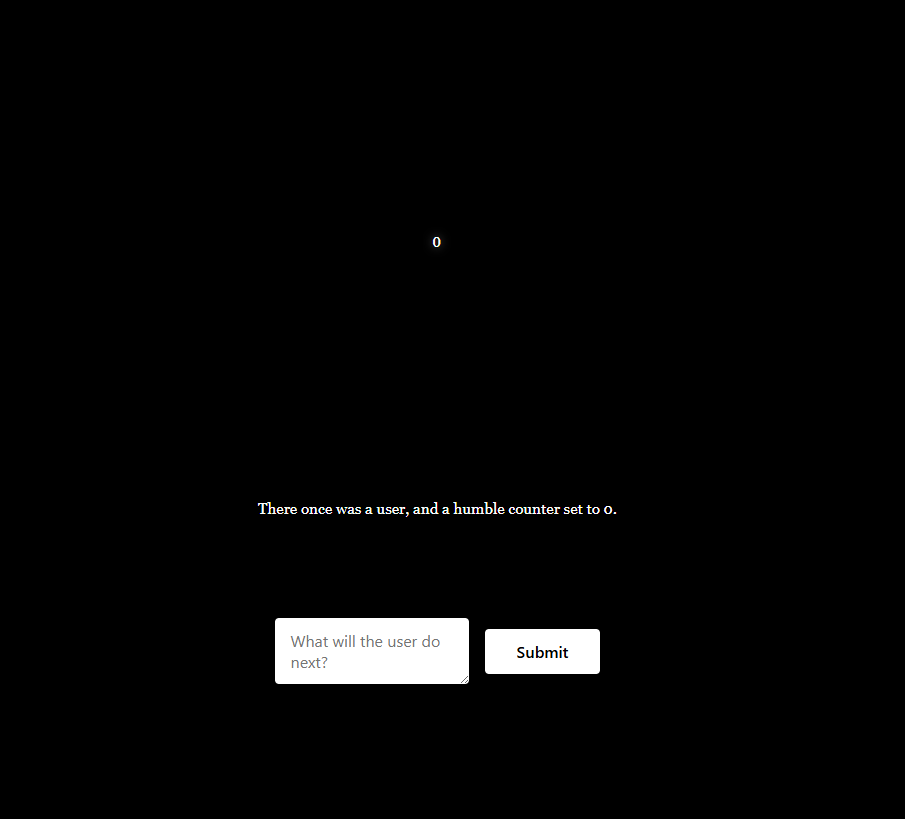

Humble Counter

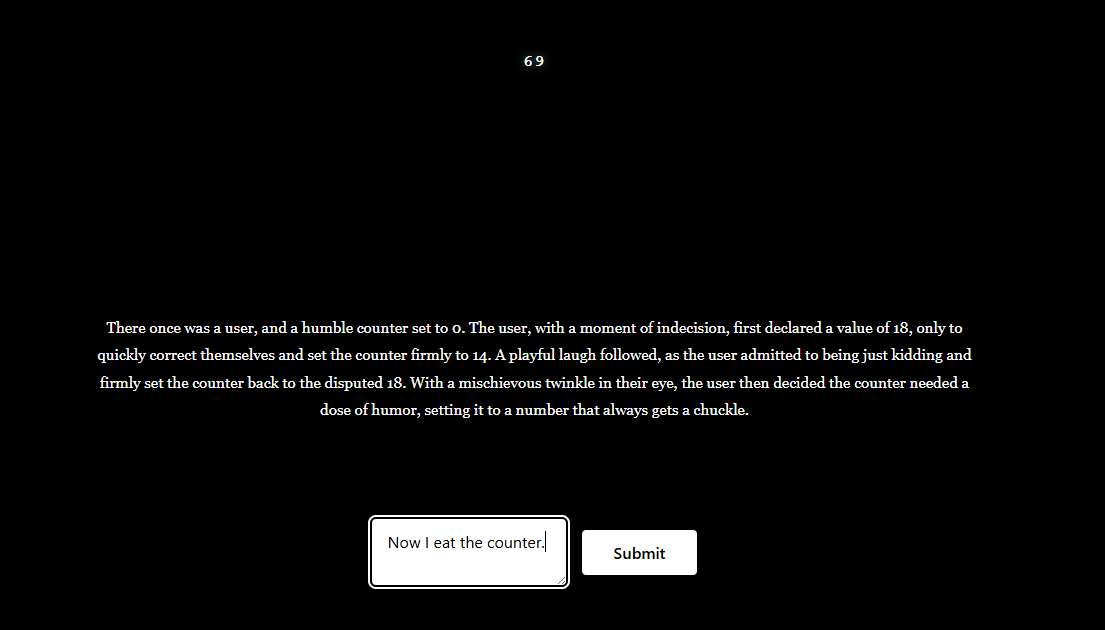

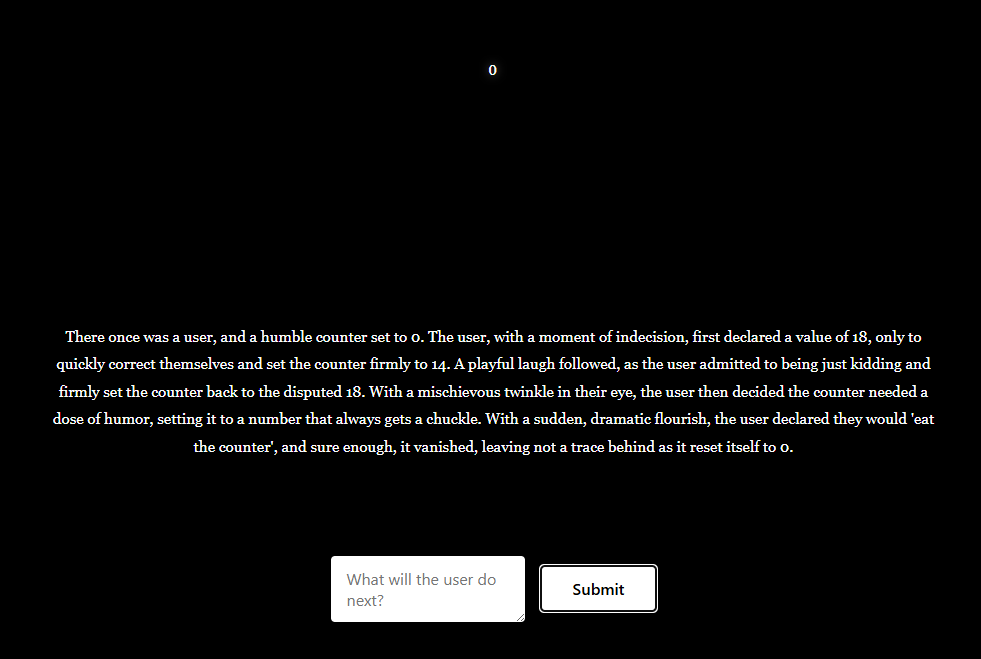

Updating a single counter is a classic React showcase. What makes this counter special is how it gets updated. Your input gets routed through a prompt augmenter to gemini-2.5-flash, which updates the counter based on your input. Gemini also returns an ongoing story about you and your humble counter. This allows for some nice flexibility in what can be done with the counter.
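One round trip through that pipeline might look roughly like the sketch below. The prompt wording, the JSON reply shape, and the `call_model` stub are assumptions for illustration (the real app is written in React and calls gemini-2.5-flash); the idea is that the LLM only proposes a new count, and plain code decides whether to accept it.

```python
"""Hypothetical sketch of a Humble Counter turn: augment, call, parse.

Prompt text, reply format, and fake_model are assumptions; a real version
would send the augmented prompt to gemini-2.5-flash instead of the stub.
"""
import json
from typing import Callable


def augment_prompt(user_input: str, count: int, story: str) -> str:
    """Wrap the raw input with the current state and strict output instructions."""
    return (
        "You maintain a single integer counter and a short ongoing story.\n"
        f"Current count: {count}\n"
        f"Story so far: {story or '(none yet)'}\n"
        f"User input: {user_input}\n"
        'Reply with JSON only: {"count": <int>, "story": "<one or two sentences>"}'
    )


def step(user_input: str, count: int, story: str,
         call_model: Callable[[str], str]) -> tuple[int, str]:
    """Run one turn; keep the old count and story if the reply is unusable."""
    reply = call_model(augment_prompt(user_input, count, story))
    try:
        data = json.loads(reply)
        new_count = data["count"]
        new_story = str(data["story"])
    except (json.JSONDecodeError, KeyError, TypeError):
        return count, story  # the model rambled; the counter stays put
    if not isinstance(new_count, int) or isinstance(new_count, bool):
        return count, story
    return new_count, new_story


def fake_model(prompt: str) -> str:
    """Offline stand-in for the LLM call so the sketch runs without an API key."""
    return json.dumps({"count": 3, "story": "You doubled down and added two more."})


if __name__ == "__main__":
    print(step("add two, please", 1, "", fake_model))
```

Passing the model in as a plain function also makes the pipeline easy to test without spending API calls.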

Now, is burning a gemini-2.5-flash call to update a single counter overkill? Yes, yes it is. But I made what I set out to make (at least mechanically), and I think there’s something a little bit beautiful about this design.

Thanks for reading, and if you have any questions or topics you’d like me to elaborate on, let me know in the comments!